Applicant NPS measures something the National Student Survey never reaches: the experience of every person who considered your institution but did not enrol. That is, statistically, the vast majority of your prospective students — and they leave no feedback unless you ask.

This guide explains how to instrument applicant NPS across the admissions funnel, which tools are genuinely fit for UK higher education, and how to turn scores into enrolment decisions rather than dashboard decoration.

Why applicant NPS is not the same as student NPS

The NSS is the most widely cited satisfaction measure in UK higher education. It is administered annually to final-year undergraduates and informs the Teaching Excellence Framework (TEF) and league table positions in the Guardian University Guide and the Complete University Guide. It is a robust instrument — for enrolled students.

The problem is that it captures none of the journey that precedes enrolment. Prospects who found your open day chaotic, your application portal confusing, or your communications tone-deaf simply choose another institution. They do not file a complaint. They do not leave a review. They vanish.

91% of website visitors leave without providing contact details; schools using an AI chatbot reduce that first-contact drop-off from 91% to 76% (Source: Skolbot funnel analysis, 30 schools, 2025-2026). Every percentage point of that invisible dropout represents prospective students whose dissatisfaction you never measured and therefore cannot act on.

Applicant NPS closes that feedback loop before the UCAS deadline. It applies the same Net Promoter logic — a single likelihood-to-recommend question on a 0–10 scale — but directs it at touchpoints in the recruitment journey: the open day experience, the application process, the quality of communications. The object of recommendation is not the institution as a whole, but the specific interaction that just occurred. That precision is what makes it actionable. For a broader view of how satisfaction metrics fit together across the full funnel, see our guide to measuring prospect satisfaction across the admissions funnel.
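As a minimal sketch of the Net Promoter logic described above, the snippet below uses the standard buckets (promoters 9–10, passives 7–8, detractors 0–6) and computes the score as the percentage of promoters minus the percentage of detractors. The function names and the sample scores are illustrative assumptions, not part of any particular survey tool.

```python
from collections import Counter


def classify(score: int) -> str:
    """Map a 0-10 answer to the standard NPS bucket."""
    if not 0 <= score <= 10:
        raise ValueError(f"score out of range: {score}")
    if score >= 9:
        return "promoter"
    if score >= 7:
        return "passive"
    return "detractor"


def nps(scores: list[int]) -> float:
    """NPS = % promoters minus % detractors, on a -100..+100 scale."""
    if not scores:
        raise ValueError("no responses to score")
    buckets = Counter(classify(s) for s in scores)
    return 100 * (buckets["promoter"] - buckets["detractor"]) / len(scores)


# Made-up open day responses: 5 promoters, 2 passives, 3 detractors.
open_day_scores = [10, 9, 9, 8, 7, 6, 3, 10, 9, 5]
print(nps(open_day_scores))  # 20.0
```

Passives count towards the denominator but not the numerator, which is why a touchpoint with many 7s and 8s can sit close to zero even when few respondents are unhappy.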

The 4 key touchpoints for NPS measurement

Timing determines response rates more than survey design does. Deploying an NPS survey 10 days after an interaction yields a fraction of the feedback you would collect within 24 hours. The table below maps the four highest-value moments in the UK admissions cycle to appropriate triggers, question wording, and realistic response rates.

| Moment | Trigger | Adapted NPS question | Average response rate |
|---|---|---|---|
| After first website visit | Exit intent or session >3 min | "How likely are you to recommend our university to a friend considering applying?" | ~8% |
| After open day / offer holder day | Email or SMS, day after the event | "How likely are you to recommend our open day to a friend in the same position?" | ~22% |
| After submitting application | Confirmation email, sent immediately | "How would you rate the experience of applying to us?" | ~31% |
| After a rejection decision | Post-decision email, sent with the outcome | "Despite our decision, would you recommend us to a friend thinking of applying?" | ~14% |

The post-application touchpoint consistently returns the highest response rates because the emotional investment is highest at that moment. Post-rejection surveys feel counterintuitive, but they are disproportionately valuable: a detractor (score 0–6) at this stage will actively advise peers against applying, while a passive or promoter score indicates that your communications and process were perceived as fair regardless of outcome.
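The trigger timings in the table could be held as configuration so that every survey is scheduled the same way. The sketch below is one possible shape, covering only the three email-triggered touchpoints (the website survey fires on-page rather than on a schedule). The dictionary keys, class name, and function name are hypothetical; the questions and delays are copied from the table.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Touchpoint:
    question: str
    delay: timedelta  # gap between the triggering event and the survey send


# Questions and timings come from the table; the keys are hypothetical names.
TOUCHPOINTS = {
    "open_day": Touchpoint(
        "How likely are you to recommend our open day to a friend in the same position?",
        timedelta(days=1),  # email or SMS the day after the event
    ),
    "application_submitted": Touchpoint(
        "How would you rate the experience of applying to us?",
        timedelta(0),  # sent with the confirmation email
    ),
    "rejection": Touchpoint(
        "Despite our decision, would you recommend us to a friend thinking of applying?",
        timedelta(0),  # sent with the outcome
    ),
}


def survey_send_time(touchpoint: str, event_time: datetime) -> datetime:
    """Return when the survey for this touchpoint should go out."""
    return event_time + TOUCHPOINTS[touchpoint].delay


open_day = datetime(2026, 1, 17, 10, 0)
print(survey_send_time("open_day", open_day))  # 2026-01-18 10:00:00
```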

For a full account of where these moments sit in the wider recruitment journey, see our article on the ideal prospect journey to enrolment.

Comparison: 5 tools for measuring applicant NPS

No single tool was designed specifically for UK higher education applicant NPS. Each of the five options below has been evaluated against the criteria that matter most for an admissions team: deployment speed, response friction, UK GDPR/ICO compliance, and HE-specific configuration capability.

| Tool | Type | Strengths | Limitations | Approx. price |
|---|---|---|---|---|
| Typeform | General survey | Elegant UX, strong CRM integrations (HubSpot, Salesforce), conditional logic | Not NPS-optimised by default; costs escalate at volume; no HE-specific templates | From ~£25/month |
| SurveySparrow | Conversational survey | Chat-style format increases completion rates; open APIs; recurring survey automation | Expensive for smaller institutions; limited UK HE configuration out of the box | From ~£19/month |
| Retently | Dedicated NPS platform | Purpose-built NPS workflows, detractor alerts, sector benchmarks; integrates with most CRMs | Primarily B2B-focused; limited adaptations for HE applicant journeys; USD pricing | From ~$25/month |
| Hotjar | On-site micro-surveys | No email required; captures feedback at the website stage; free tier available | Not native NPS; limited to website interactions; 35 sessions/day on free plan | Free up to 35 sessions/day |
| AI Chatbot (e.g. Skolbot) | Conversational AI platform | NPS question embedded naturally in conversation flow; zero additional friction; contextual follow-up question built in | Requires chatbot deployment on your site; not a standalone survey tool | Included in platform |

On benchmarks: Retently's 2025 NPS data places the education sector median at +40 to +55, though this figure reflects enrolled student populations rather than applicants. UK universities typically score +35 to +50 in the Guardian student satisfaction data. No public benchmark currently exists for applicant or prospect NPS — which means your internal trend data, tracked quarterly across the UCAS and Clearing cycle, becomes your most reliable reference point.

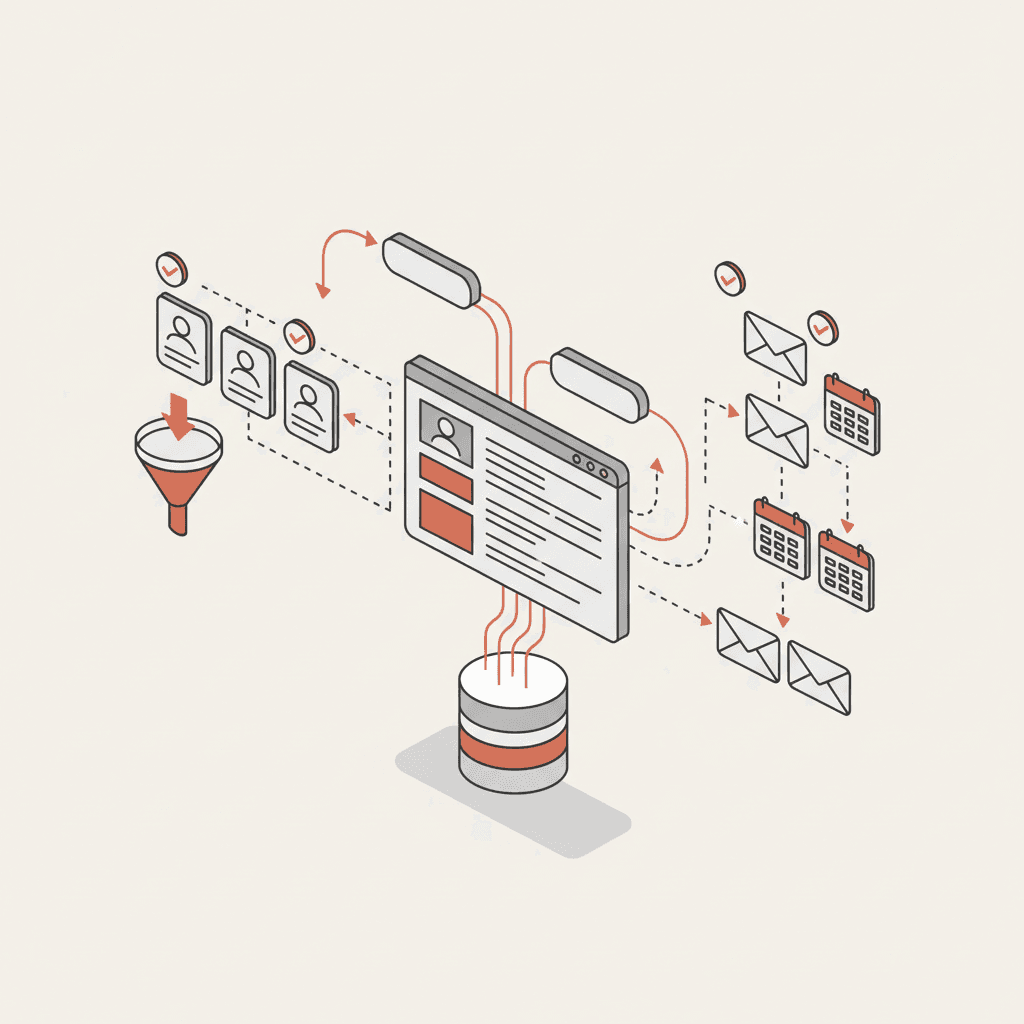

The AI chatbot approach warrants particular attention for admissions teams already considering conversational tools. Because the NPS question is embedded within an existing interaction — a query about entry requirements, for example — the prospect is already engaged. The response rate is structurally higher than a standalone post-visit email survey. JISC research on digital experience in higher education consistently points to friction reduction as the primary driver of student engagement with institutional digital tools; the same principle applies to survey completion.

Methodology — adapting the NPS question for admissions

The standard NPS question ("How likely are you to recommend [organisation] to a friend or colleague?") was developed by Fred Reichheld of Bain & Company for commercial customer relationships. Transposing it directly to HE applicant surveys produces ambiguous data, because the object of recommendation is unclear: the institution? The programme? The application process? The open day?

Four adaptations make applicant NPS substantially more useful.

Specify the object of recommendation. "How likely are you to recommend our open day to a friend considering applying?" produces cleaner, more actionable data than "How likely are you to recommend us?" Each touchpoint survey should have its own focused question rather than a generic institutional one.

Follow with one open-ended qualitative question. "What was the main reason for your score?" A short text field after the 0–10 rating converts a score into a verbatim you can act on. This is where prospects tell you that the car park was full, that the programme director was difficult to understand, or that the confirmation email arrived three days after the event. Numbers describe the size of the problem; verbatims locate it.

Prioritise follow-up with detractors within 48 hours. A score of 0–6 from a prospect who attended your offer holder day and has not yet confirmed their place is a conversion risk. A personal follow-up email — not an automated sequence — sent within 48 hours recovers a measurable share of those at-risk enrolments. This connects directly to yield management: see our article on yield management for student enrolment for the wider conversion framework.
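To make the 48-hour rule operational, a team could generate a daily follow-up list from survey responses. The sketch below assumes a simple in-memory record per response; the `SurveyResponse` fields, the `follow_up_queue` name, and the sample data are all hypothetical, and a real setup would read from your CRM or survey tool instead.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

FOLLOW_UP_WINDOW = timedelta(hours=48)
DETRACTOR_MAX = 6  # scores 0-6 count as detractors


@dataclass
class SurveyResponse:
    prospect_id: str
    score: int
    submitted_at: datetime
    place_confirmed: bool


def follow_up_queue(responses: list[SurveyResponse]) -> list[tuple[str, datetime]]:
    """List (prospect_id, follow-up deadline) for at-risk detractors, most urgent first."""
    at_risk = [
        (r.prospect_id, r.submitted_at + FOLLOW_UP_WINDOW)
        for r in responses
        if r.score <= DETRACTOR_MAX and not r.place_confirmed
    ]
    return sorted(at_risk, key=lambda item: item[1])


responses = [
    SurveyResponse("p-101", 4, datetime(2026, 3, 2, 9, 0), place_confirmed=False),
    SurveyResponse("p-102", 9, datetime(2026, 3, 2, 9, 5), place_confirmed=False),
    SurveyResponse("p-103", 6, datetime(2026, 3, 1, 18, 0), place_confirmed=False),
    SurveyResponse("p-104", 2, datetime(2026, 3, 2, 8, 0), place_confirmed=True),
]
for prospect_id, deadline in follow_up_queue(responses):
    print(prospect_id, deadline)
```

Here only p-103 and p-101 appear in the queue: p-102 is a promoter and p-104 has already confirmed their place, so neither is a conversion risk in the sense used above.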

Track no-show rates alongside NPS scores. The two metrics tend to move together. No-show rates at open days fall to 19% for prospects who received a personalised chatbot follow-up, compared to 52% with no follow-up (Source: Tracking of 4,200 open day registrations, Oct 2025 – Feb 2026). Treat a low post-registration NPS score as a leading indicator of no-show risk, not just a retrospective measure of dissatisfaction.

Analysing and acting on your applicant NPS

A score is only useful if it drives a decision. The four analysis habits below are what separate admissions teams that use NPS as a reporting metric from those that use it to change recruitment outcomes.

Segment by recruitment channel. An NPS of +28 from prospects who arrived via Instagram ads and +52 from prospects who found you through the UCAS search tool should not be averaged into a single number: they are two separate signals about two separate audiences with different expectations. Channel-level NPS data directly informs your media budget allocation. For a structured approach to channel attribution, see our guide to unanswered prospect questions and what they cost schools.
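A short sketch of channel-level segmentation, assuming each response is stored as a `(channel, score)` pair. The channel labels and scores are made up for illustration, and `nps_by_channel` is a hypothetical helper name.

```python
from collections import defaultdict


def nps(scores: list[int]) -> float:
    """% promoters (9-10) minus % detractors (0-6)."""
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


def nps_by_channel(responses: list[tuple[str, int]]) -> dict[str, float]:
    """Group scores by acquisition channel and compute one NPS per channel."""
    grouped: dict[str, list[int]] = defaultdict(list)
    for channel, score in responses:
        grouped[channel].append(score)
    return {channel: nps(scores) for channel, scores in grouped.items()}


responses = [
    ("instagram_ads", 9), ("instagram_ads", 6), ("instagram_ads", 7), ("instagram_ads", 10),
    ("ucas_search", 10), ("ucas_search", 9), ("ucas_search", 8), ("ucas_search", 9),
]
print(nps_by_channel(responses))         # {'instagram_ads': 25.0, 'ucas_search': 75.0}
print(nps([score for _, score in responses]))  # 50.0
```

The pooled figure of 50.0 hides a 50-point gap between the two channels, which is the information a media budget decision actually needs.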

Cross-reference with conversion rates. Map NPS scores at each touchpoint against the conversion rate to the next funnel stage. A high post-visit NPS that does not correlate with application rates suggests the issue is not experience quality but programme fit or competitive positioning. A low post-open-day NPS that does correlate with application drop-off is a direct operations problem.
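One way to check whether NPS and conversion move together is a Pearson correlation across cohorts, for example one data point per open day. The sketch below implements the coefficient by hand to stay dependency-free; the cohort figures are invented, and with only a handful of cohorts the result should be read as a prompt for investigation rather than proof.

```python
from math import sqrt


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two series of equal length, at least 2 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / (spread_x * spread_y)


# Hypothetical cohorts: post-open-day NPS and the share who went on to apply.
open_day_nps = [12, 25, 31, 44, 52]
application_rate = [0.10, 0.16, 0.15, 0.22, 0.27]
print(round(pearson(open_day_nps, application_rate), 2))  # 0.98
```

A coefficient near +1 at a touchpoint suggests experience quality is tracking conversion there; a value near zero alongside high scores points back to programme fit or competitive positioning.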

Track quarterly across the Clearing cycle. Applicant NPS naturally fluctuates across the admissions calendar. January scores reflect carefully planned offer holder days. August Clearing scores reflect a team under pressure handling volume it was not resourced for. Comparing January to August without controlling for context produces misleading trend lines. Segment by cycle stage before drawing conclusions.

Publish scores internally alongside conversion data. NPS improvement without conversion improvement is a vanity metric. NPS deterioration alongside stable conversion rates suggests you are retaining applicants despite a poor experience — a position that is fragile and likely to worsen as word of mouth propagates. For context on how online reputation interacts with recruitment, see our article on Google reviews and school reputation. Detailed frameworks for acting on satisfaction data across the full funnel are covered in measuring prospect satisfaction across the admissions funnel.

FAQ

What is the difference between student NPS and applicant NPS?

Student NPS measures loyalty among enrolled students — it is closely related to NSS outcomes and institutional reputation. Applicant NPS measures the experience of prospective students during the recruitment journey, before any enrolment decision is made. The two populations have different experiences, different emotional stakes, and different propensities to recommend. They require separate measurement instruments and separate benchmarks.

How often should I survey prospects with NPS?

Survey at each significant touchpoint rather than on a fixed calendar schedule. The four key moments — first website visit, open day, application submission, and post-decision — each carry their own signal. Avoid surveying the same prospect more than twice in a three-month window; survey fatigue degrades both response rates and data quality.
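The fatigue rule can be enforced with a small guard before each send. This sketch approximates the three-month window as 90 days, which is an assumption; the function name and the idea of storing previous send times per prospect are also illustrative.

```python
from datetime import datetime, timedelta

MAX_SURVEYS_PER_WINDOW = 2
FATIGUE_WINDOW = timedelta(days=90)  # assumption: "three months" treated as 90 days


def can_survey(previous_sends: list[datetime], now: datetime) -> bool:
    """True if sending now keeps the prospect at or under the cap for the window."""
    recent = [sent for sent in previous_sends if now - FATIGUE_WINDOW < sent <= now]
    return len(recent) < MAX_SURVEYS_PER_WINDOW


history = [datetime(2026, 1, 10), datetime(2026, 2, 20)]
print(can_survey(history, datetime(2026, 3, 15)))  # False: two sends in the last 90 days
print(can_survey(history, datetime(2026, 4, 15)))  # True: the January send has aged out
```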

What constitutes a good NPS for university applicants?

No peer-reviewed public benchmark exists for applicant NPS in UK higher education. As a working reference, institutions using dedicated NPS tooling typically report applicant scores between +20 and +45, with open-day-specific scores at the higher end of that range when the event is well organised. The most valuable benchmark is your own institution's quarter-on-quarter trend, not a sector average.

Can NPS measurement be integrated into a chatbot?

Yes. An AI chatbot can embed the NPS question at the natural close of a conversation — after answering a query about entry requirements or funding options, for example. This approach eliminates the need for a separate survey email, captures the score at peak engagement, and enables the follow-up qualitative question within the same conversational thread. Response rates via this method are typically higher than post-interaction email surveys because friction is lower and the context is immediate.

Does ICO/UK GDPR allow collecting NPS data from prospects?

Yes, provided you have a lawful basis for processing. For most institutions, legitimate interests is the appropriate basis — collecting feedback to improve the applicant experience is a proportionate purpose that a reasonable prospect would expect. You must include NPS data collection in your privacy notice, give prospects a clear opt-out mechanism, and not retain scores beyond the period necessary for the stated purpose. If your prospects include under-18 applicants — common in sixth-form or foundation year recruitment — you must apply the ICO's children's data standards. For a comprehensive treatment of data compliance in prospect communications, see our guide to Gen Z expectations for school websites.
