Why a generic chatbot does not convert in higher education

A chatbot that opens with "Hello, how can I help you?" when a prospect is comparing three business schools will not convert anyone. The problem is not the technology — it is the absence of scenarios designed for the specific journey of a student prospect.

The data confirms it: 91% of visitors to an institution's website leave without making first contact (Source: Skolbot funnel analysis, 30 institutions, 2025-2026 cohort). Not because the information is missing from the site, but because it arrives too late, in the wrong format, or without a trigger for action.

A high-performing AI chatbot in education works like an automated admissions adviser. It detects intent, adapts its response, and pushes the prospect towards the next step in the funnel — Open House registration, application submission, brochure request. For an overview of the chatbot's role in recruitment, see our complete AI chatbot guide for higher education.

This article details five proven scenarios, each with its conversational flow, measured results, and a sample dialogue. These are not hypotheses: they are scenarios deployed in the field, measured across dozens of partner institutions.

Scenario 1: The automated FAQ that frees your admissions team

The problem

72% of prospect questions are simple FAQs (fees, dates, admissions), 21% require institution-specific context, and only 7% need a human (Source: automated classification, 12,000 Skolbot conversations, 2025). Your admissions team spends its days on that routine 72% instead of supporting the 7% of complex cases.

How does it work?

The chatbot is trained on your content: programme pages, fee schedules, FAQ pages, PDF brochures. It responds in 3 seconds, whereas an email reply takes 47 hours and a contact-form reply takes 72 hours (Source: Skolbot mystery shopping audit, 80 institutions, 2025).

Prospect: "How much is the tuition for the Master in Digital Marketing?"

Chatbot: "Tuition fees for the Master in Digital Marketing are CAD 12,500 per year. You can reduce this through a work-study programme: in that case, fees are covered by the host employer. 78% of our Master students are on work-study placements. Would you like to learn more about work-study options, or would you prefer to visit the campus at our next Open House on 15 April?"
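As a rough illustration of answering from institution content, the sketch below matches a question against titled content snippets by word overlap and returns nothing when no snippet is close enough, so the question can be passed to a human. The snippet titles, the `best_answer` helper, and the `min_overlap` threshold are assumptions made for this example. It is not a description of how Skolbot is built, and a production system would rely on a language model or semantic search rather than word counts.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def best_answer(question: str, snippets: dict[str, str], min_overlap: int = 2):
    """Return the answer whose title shares the most words with the question.

    Returns None when the best overlap is below `min_overlap`, which is the
    cue to hand the question over to the admissions team.
    """
    question_words = tokens(question)
    best_score, best = 0, None
    for title, answer in snippets.items():
        score = len(question_words & tokens(title))
        if score > best_score:
            best_score, best = score, answer
    return best if best_score >= min_overlap else None


# Hypothetical content extracted from programme pages (assumption).
SNIPPETS = {
    "Master in Digital Marketing tuition fees": "Tuition is CAD 12,500 per year.",
    "Open House dates": "The next Open House is on 15 April.",
}

print(best_answer("How much is the tuition for the Master in Digital Marketing?", SNIPPETS))
```

Common words such as "in" or "the" count towards the overlap here, which is acceptable for a sketch but would need stop-word filtering in practice.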

The results

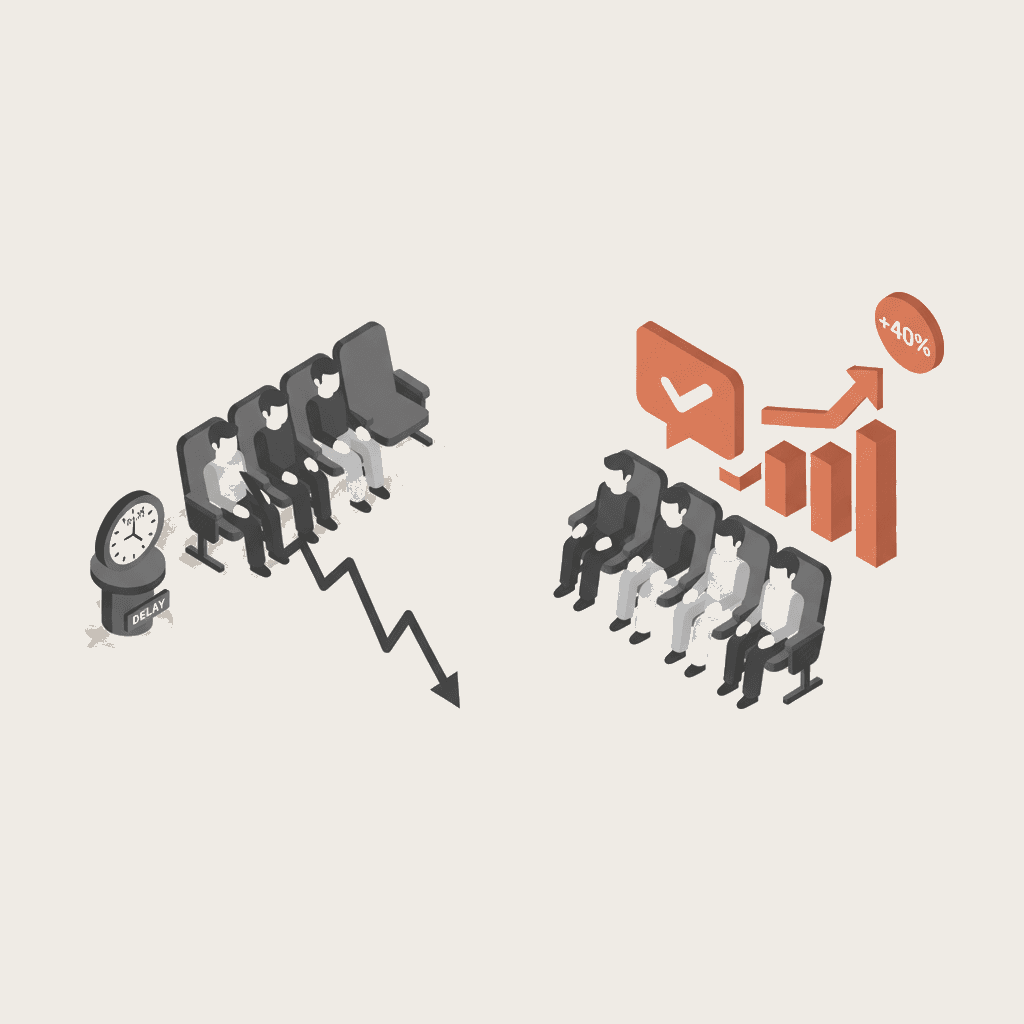

- Bounce rate: from 68% to 41% (−40%) (Source: A/B test, 22 institution websites, Sept — Dec 2025)

- Session duration: from 1 min 45 s to 4 min 12 s (+140%)

- Pages per visit: from 1.8 to 3.4 (+89%)
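The percentages in brackets are relative changes between the "before" and "after" values. A minimal sketch of that arithmetic, where the `relative_change` helper is a name introduced for this example and the session durations are converted to seconds:

```python
def relative_change(before: float, after: float) -> float:
    """Return the percentage change from `before` to `after`."""
    return (after - before) / before * 100


# Figures quoted in the list above.
bounce = relative_change(68, 41)        # bounce rate, in %
duration = relative_change(105, 252)    # 1 min 45 s and 4 min 12 s, in seconds
pages = relative_change(1.8, 3.4)       # pages per visit

print(f"{bounce:+.0f}% {duration:+.0f}% {pages:+.0f}%")  # -40% +140% +89%
```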

Tuition fees come up in 89% of conversations. Those prospects get an immediate answer instead of leaving the site to search elsewhere.

Scenario 2: Real-time Open House registration

The problem

Open Houses remain the decisive moment in the enrolment decision. A static form waits for the prospect to find and complete it — that converts at 6.2%. The chatbot does the opposite: it detects interest and proposes registration at the exact moment the prospect is engaged.

How does it work?

The chatbot detects intent signals during the conversation. A prospect who asks successive questions about tuition fees (89% of conversations), career outcomes (84%), and work-study options (78%) signals serious interest — that is the moment to propose the Open House.
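One simple way to picture this signal detection is to count how many distinct high-intent topics a prospect has raised and to propose the Open House once a threshold is reached. The keyword lists, the `should_propose_open_house` function, and the threshold of two topics are all assumptions for illustration. A real deployment would more likely use a trained intent classifier.

```python
# Hypothetical keyword lists (assumption); substring matching is deliberately
# naive and would produce false positives in production.
TOPIC_KEYWORDS = {
    "tuition": ("tuition", "fee", "cost", "price"),
    "careers": ("career", "job", "salary", "outcome"),
    "work_study": ("work-study", "placement", "apprenticeship"),
}


def topics_in(message: str) -> set[str]:
    """Return the high-intent topics mentioned in a single message."""
    text = message.lower()
    return {
        topic
        for topic, words in TOPIC_KEYWORDS.items()
        if any(word in text for word in words)
    }


def should_propose_open_house(messages: list[str], threshold: int = 2) -> bool:
    """True once the prospect has raised at least `threshold` distinct topics."""
    seen: set[str] = set()
    for message in messages:
        seen |= topics_in(message)
    return len(seen) >= threshold


conversation = ["How much is the tuition?", "What jobs do graduates get?"]
print(should_propose_open_house(conversation))
```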

Registration happens within the conversation, with no redirect to an external form:

Chatbot: "You're asking great questions about the programme. The best way to discover the campus and meet the teaching staff is at our next Open House on 15 April. I can register you in 30 seconds — what's your first name?"

Prospect: "Marie"

Chatbot: "Thanks, Marie. What email address should I send the confirmation to?"

Prospect: "marie.dupont@gmail.com"

Chatbot: "Done. Will you be coming alone or with family? I can reserve a place for your parents too."
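The dialogue above follows a slot-filling pattern: the chatbot asks for one missing field at a time and only moves on once the reply is usable. A minimal sketch, assuming three fields and a deliberately loose email check. The `OpenHouseRegistration` class, its field names, and the regular expression are hypothetical.

```python
import re

# Fields collected in order, with the prompt used for each (assumption).
STEPS = [
    ("first_name", "What's your first name?"),
    ("email", "What email address should I send the confirmation to?"),
    ("party", "Will you be coming alone or with family?"),
]
# Loose sanity check only; it does not validate deliverability.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


class OpenHouseRegistration:
    """Collects registration details one question at a time."""

    def __init__(self) -> None:
        self.answers: dict[str, str] = {}

    def next_prompt(self):
        """Return the next question, or None when every field is filled."""
        for field, prompt in STEPS:
            if field not in self.answers:
                return prompt
        return None

    def submit(self, reply: str) -> bool:
        """Store the reply for the current field; return False if rejected."""
        for field, _ in STEPS:
            if field not in self.answers:
                value = reply.strip()
                if not value or (field == "email" and not EMAIL_RE.match(value)):
                    return False
                self.answers[field] = value
                return True
        return False  # nothing left to fill
```

A rejected reply leaves the state unchanged, so calling `next_prompt()` again repeats the same question instead of restarting the flow.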

This scenario is detailed step by step in our article on automated Open House registration via chatbot.

The results

The Open House registration rate via chatbot reaches 18.4%, compared with 6.2% via the standard form and 4.8% via email (Source: UTM tracking + multi-touch attribution, 2025-2026 season, 35 institutions). That is roughly a 3x multiplier over the standard form (18.4 / 6.2 ≈ 2.97).

The chatbot then manages automated follow-up:

- D-7: personalised message with a reminder of the programme of interest

- D-1: actionable reminder with address, directions, and the name of the programme lead

- D+1: post-Open House follow-up (application prompt for attendees, rescheduling for no-shows)
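The D-7, D-1, and D+1 sequence can be expressed as day offsets from the Open House date. The sketch below is illustrative: the `follow_up_schedule` function and the rule of skipping reminders dated before the registration day are assumptions, and the year in the example is arbitrary.

```python
from datetime import date, timedelta

# Offsets in days relative to the Open House, with a short label for each.
FOLLOW_UPS = [
    (-7, "personalised reminder of the programme of interest"),
    (-1, "practical reminder: address, directions, programme lead"),
    (1, "post-Open House follow-up"),
]


def follow_up_schedule(open_house: date, registered_on: date) -> list[tuple[date, str]]:
    """Return (send date, label) pairs, skipping dates before registration."""
    schedule = []
    for offset, label in FOLLOW_UPS:
        send_on = open_house + timedelta(days=offset)
        if send_on >= registered_on:
            schedule.append((send_on, label))
    return schedule


# A prospect who registers five days before the event misses the D-7 message.
for send_on, label in follow_up_schedule(date(2026, 4, 15), date(2026, 4, 10)):
    print(send_on.isoformat(), label)
```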

Result: no-show rates drop from 52% without follow-up to 14% with chatbot + SMS (Source: tracking of 4,200 Open House registrations, 12 institutions, Oct 2025 — Feb 2026).

Scenario 3: Re-engaging dropped prospects

The problem

Even after a first contact, 64% of prospects abandon before applying (Source: funnel analysis, 30 institutions, 2025-2026 cohort). Without a chatbot, a prospect who visits the MBA page on a Tuesday evening, hesitates, and leaves is lost: no email, no usable trace.

How does it work?

The chatbot captures the prospect before they leave. If they started a conversation, the chatbot has their first name, email, and programme of interest. If the prospect returns to the site without completing the journey, the chatbot recognises them and resumes the conversation where it left off.

Chatbot: "Welcome back, Sophie. Last time, you were looking at the Bachelor in Communication. You asked about international placements. Since then, we've published a testimonial from Clara, a third-year student who did her placement at Ogilvy in London. Would you like to read it, or would you prefer to move forward with your application?"

The re-engagement flow:

- Return detection: the chatbot identifies a known visitor returning

- Contextualisation: it recalls the topics of the previous conversation

- Personalised content: it offers a new element (testimonial, news, Open House date) related to the identified interest

- Next action: it pushes towards the next funnel step
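The four steps of this flow can be sketched as a single function that builds the welcome-back message. The `ProspectProfile` structure, the in-memory `profiles` store keyed by a visitor identifier, and the message wording are assumptions for illustration. How returning visitors are actually recognised (cookie, login, email link) is not specified here.

```python
from dataclasses import dataclass, field


@dataclass
class ProspectProfile:
    first_name: str
    programme: str
    topics: list[str] = field(default_factory=list)


# Hypothetical in-memory store keyed by a visitor identifier (assumption).
profiles: dict[str, ProspectProfile] = {}


def welcome_back(visitor_id: str, new_content: dict[str, str]):
    """Build a re-engagement message, or return None for an unknown visitor."""
    profile = profiles.get(visitor_id)  # 1. return detection
    if profile is None:
        return None
    parts = [
        f"Welcome back, {profile.first_name}.",
        f"Last time, you were looking at the {profile.programme}.",  # 2. context
    ]
    for topic in profile.topics:  # 3. personalised content, first match only
        if topic in new_content:
            parts.append(f"Since then, we've published {new_content[topic]}.")
            break
    parts.append("Would you like to move forward with your application?")  # 4. next action
    return " ".join(parts)


profiles["v1"] = ProspectProfile(
    "Sophie", "Bachelor in Communication", ["international placements"]
)
print(welcome_back("v1", {"international placements": "a testimonial from Clara"}))
```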

The results

Data shows that 34% of prospects who interacted with the chatbot return within 7 days, compared to only 12% without a chatbot — a 2.8x multiplier (Source: Skolbot cohort analysis, 8,000 sessions tracked over 90 days, 2025).

A generic follow-up email achieves a 4-6% open rate. A contextual chatbot message — recalling the programme and proposing a concrete action — re-engages far more effectively. Detailed results are documented in our composite case study.

Scenario 4: Prospect qualification and routing

The problem

A grade 12 student comparing five schools does not have the same needs as a career-changer looking for an MBA. Yet most websites treat them identically: a single form, a generic email, an undifferentiated queue.

How does it work?

The chatbot qualifies each prospect through the conversation, without the prospect feeling they are filling in a form. Qualification questions are woven in naturally:

Chatbot: "To point you in the right direction, I have a few quick questions. Are you currently studying or working?"

Prospect: "I'm in grade 12, my final year of high school"

Chatbot: "Great. What type of programme interests you: a 3-year Bachelor's, or a direct 5-year post-secondary programme?"

Prospect: "A Bachelor's, ideally with a work-study placement"

Chatbot: "Our Bachelor in Business & Marketing with work-study is our most popular programme for profiles like yours. Fees are covered by the employer. Would you like me to connect you with Ms. Tremblay, the Bachelor programme director? She can answer your specific questions and guide you through the application."

Routing is based on four criteria:

- Current education level (secondary student, undergraduate, working professional)

- Programme of interest (Bachelor's, Master's, MBA, continuing education)

- Preferred mode (full-time, work-study, part-time)

- Prospect maturity (exploring, comparing, ready to apply)
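A routing decision built on these four criteria might look like the sketch below. The queue naming, the priority levels, and the rule that a human handover is offered once the prospect is past the exploring stage are assumptions for the example, not documented behaviour.

```python
from dataclasses import dataclass
from enum import Enum


class Maturity(Enum):
    EXPLORING = "exploring"
    COMPARING = "comparing"
    READY_TO_APPLY = "ready_to_apply"


# Hypothetical mapping from maturity to follow-up priority (assumption).
PRIORITY = {
    Maturity.EXPLORING: "low",
    Maturity.COMPARING: "normal",
    Maturity.READY_TO_APPLY: "high",
}


@dataclass(frozen=True)
class Qualification:
    level: str       # e.g. "secondary", "undergraduate", "professional"
    programme: str   # e.g. "bachelor", "master", "mba", "continuing_education"
    mode: str        # e.g. "full_time", "work_study", "part_time"
    maturity: Maturity


def route(q: Qualification) -> dict:
    """Turn a qualified profile into a routing decision."""
    return {
        "queue": f"{q.programme}_admissions",
        "priority": PRIORITY[q.maturity],
        "human_handover": q.maturity is not Maturity.EXPLORING,
        "tags": [q.level, q.mode],
    }


print(route(Qualification("secondary", "bachelor", "work_study", Maturity.COMPARING)))
```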

The results

- Qualified leads per month: from 120 to 195, i.e. +62% (Source: median results, 18 institutions, 2024-2025)

- Cost per lead: from CAD 55 to CAD 34, i.e. −38%

Integration with CRMs such as Salesforce Education Cloud or HubSpot is essential: the chatbot automatically pushes the enriched profile (programme, level, questions asked, maturity score) into your existing pipeline.
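The enriched profile can be pictured as a small JSON document. The sketch below only builds that document. It does not call Salesforce or HubSpot, and the field names and the 0 to 100 range for the maturity score are assumptions, since each CRM defines its own schema.

```python
import json


def build_crm_payload(
    email: str,
    programme: str,
    level: str,
    questions: list[str],
    maturity_score: int,
) -> str:
    """Serialise an enriched prospect profile as JSON (hypothetical schema)."""
    if not 0 <= maturity_score <= 100:
        raise ValueError("maturity_score must be between 0 and 100")
    profile = {
        "email": email,
        "programme": programme,
        "level": level,
        "questions_asked": list(questions),
        "maturity_score": maturity_score,
    }
    # ensure_ascii=False keeps accented names readable in the output.
    return json.dumps(profile, ensure_ascii=False)


print(
    build_crm_payload(
        "marie.dupont@gmail.com",
        "Bachelor in Business & Marketing",
        "secondary",
        ["tuition fees", "work-study"],
        70,
    )
)
```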

Scenario 5: Multilingual welcome for international prospects

The problem

Your site is in English, your admissions team speaks English, and 58% of your international prospects are not native English speakers (Source: language detection, 8,500 Skolbot conversations, 2025-2026). The breakdown: French (28%), Spanish (11%), Arabic (7%), Portuguese (4%), Mandarin (3%), German (2%). Asking them to navigate a site in a language they are not fluent in and send an email — hoping for a reply in their language within 72 hours — means losing them before first contact.

How does it work?

The chatbot automatically detects the prospect's language from the very first message and responds in that language, with no manual configuration.

Prospect: "Quels sont les frais de scolarité pour le Master en Commerce International ?"

Chatbot: "Les frais de scolarité pour le Master en Commerce International sont de 9 200 EUR par an. Des bourses sont disponibles pour les étudiants internationaux — 23 % de nos étudiants en Master bénéficient d'une bourse au mérite. Souhaitez-vous des détails sur le processus de candidature, ou préférez-vous assister à notre prochaine Journée Portes Ouvertes le 15 avril ? Je peux vous inscrire immédiatement."

The chatbot handles more than 30 languages. If the conversation starts in Spanish, follow-ups are sent in Spanish. This scenario combines with the previous four: a French-speaking prospect can ask an FAQ question (scenario 1), register for an Open House (scenario 2), be re-engaged (scenario 3), and qualified (scenario 4) — all in their language.
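Keeping follow-ups in the conversation's language amounts to storing a language code with the prospect and using it to pick the message template. The sketch below assumes the language has already been detected upstream and covers only three templates. The template texts, the `follow_up_message` function, and the fallback to English are illustrative assumptions.

```python
# Hypothetical reminder templates keyed by two-letter language code (assumption).
FOLLOW_UP_TEMPLATES = {
    "en": "Reminder: our Open House takes place on {date}.",
    "fr": "Rappel : notre Journée Portes Ouvertes a lieu le {date}.",
    "es": "Recordatorio: nuestra Jornada de Puertas Abiertas es el {date}.",
}


def follow_up_message(language: str, date: str) -> str:
    """Pick the template for the stored language, falling back to English."""
    code = language.lower()[:2]  # "fr-CA" and "FR" both map to "fr"
    template = FOLLOW_UP_TEMPLATES.get(code, FOLLOW_UP_TEMPLATES["en"])
    return template.format(date=date)


print(follow_up_message("fr-CA", "15 avril"))
```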

The results

The first-contact rate for international prospects triples with a multilingual chatbot. GEO visibility scores show that institutions with structured data and a multilingual chatbot achieve on average +12 points of AI visibility compared to monolingual institutions (Source: Skolbot GEO monitoring, 500 queries x 6 countries x 3 AI engines, Feb 2026).

The cost of acquisition for international students from outside Europe ranges between CAD 4,200 and CAD 6,000 (Source: estimates from EAIE, StudyPortals, EAB, Universities Canada). Each international prospect recovered through the multilingual chatbot represents a direct saving on that acquisition cost.

Comparative table: impact and effort by scenario

| Scenario | Primary impact | Key metric | Implementation effort |
|---|---|---|---|
| Automated FAQ | Site engagement | Bounce rate: −40% | Low (auto-scraping) |
| Open House registration | Direct conversion | Registration rate: 18.4% vs 6.2% | Low (dates + locations) |
| Dropout re-engagement | Prospect retention | 34% return within 7 days (vs 12%) | Medium (sequences to configure) |
| Prospect qualification | Admissions productivity | Qualified leads: +62% | Medium (routing criteria) |
| Multilingual welcome | International recruitment | First-contact rate for international prospects: 3x | Low (enabled by default) |

The recommended deployment order: start with the FAQ (immediate impact, zero configuration) and Open House registration (direct conversion). Then add qualification and re-engagement. Multilingual support is enabled by default with Skolbot — no additional effort required.

To calculate the financial return of these scenarios for your institution, use our student chatbot ROI calculation method.

FAQ

Do you need to deploy all 5 scenarios at once?

No. The automated FAQ and Open House registration can be activated from day one. Qualification requires defining your routing criteria with the admissions team (half a day). Dropout re-engagement needs a week of data to calibrate the sequences. Multilingual support is native and requires no configuration. Progressive deployment is the best approach: measure each scenario's impact before activating the next.

Which scenario has the greatest impact on enrolment?

Real-time Open House registration produces the most direct and measurable impact: an 18.4% rate via chatbot versus 6.2% via form, a 3x multiplier. Combined with anti-no-show follow-up (14% absence versus 52% without follow-up), it is the scenario that converts the most prospects into campus visitors — and campus visitors into enrolled students. According to Gartner, conversational AI agents will handle 80% of first-level interactions in higher education by the end of 2026.

Can a chatbot really qualify a prospect as well as a human?

The chatbot qualifies better on objective criteria (level, programme, mode) because it asks the questions systematically, without forgetting a criterion and without bias. However, it does not replace human judgement on the 7% of complex cases — uncertain motivation, particular personal circumstances, exception requests. That is why scenario 4 includes a smooth handover to the right contact, with the full conversation history. According to HubSpot, companies that qualify leads via chatbot before human handover reduce their sales cycle by 33%.

Do these scenarios work for all types of institution?

The metrics cited are medians observed across a diverse panel: business schools, engineering schools, communication schools, private universities. Conversion rates vary by institution type — for example, computing schools convert at 5.2% compared to 1.8% for communication schools (Source: Skolbot analysis, 50 institutions, 2025-2026). But all five scenarios apply universally. The conversational flows are configured on each institution's data, not on a generic template.

Every prospect who leaves your site without an answer is a potential student lost. These five scenarios are not theoretical — they are running today across dozens of institutions, with measured and documented results.
