Eight mistakes that undermine student chatbot projects before they begin

UK higher education institutions are deploying student chatbots faster than ever — but deployment speed and deployment quality are not the same thing. The most common chatbot failures in admissions are not caused by poor technology. They are caused by predictable, avoidable decisions made before and after go-live.

This article documents the eight mistakes admissions and digital teams make most often, drawing on data from chatbot deployments across UK institutions. Each mistake is paired with the concrete fix. Whether you are mid-procurement or six months post-launch, this is your diagnostic checklist.

For the foundational context, see our complete guide to AI chatbots in higher education.

The 8 mistakes: a quick reference

Before the detail, here is the full map of where deployments fail — and when each mistake typically surfaces.

| # | Mistake | When it bites | Impact |
|---|---|---|---|
| 1 | Incomplete knowledge base at launch | Week one | Unanswered questions, prospect drop-off |
| 2 | No escalation path to human advisors | Throughout cycle | Lost applicants, reputational risk |
| 3 | Ignoring analytics after go-live | Month 2 onwards | Stale performance, missed optimisation |
| 4 | Internal-only testing before launch | Day one | Systematic blind spots in coverage |
| 5 | No CRM integration | Throughout cycle | Data silos, broken recruitment funnel |
| 6 | Irregular knowledge base maintenance | Mid-cycle | Wrong fees, wrong deadlines, lost trust |
| 7 | Underestimating UK GDPR/ICO requirements | Anytime | Legal exposure, prospect data at risk |
| 8 | Not training admissions staff on the hybrid model | Immediately post-launch | Advisor confusion, handoff failures |

Mistake 1: Launching with an incomplete knowledge base

The chatbot goes live before the knowledge base covers the questions prospective students actually ask. The result: the chatbot returns unhelpful responses on the queries it encounters most, exactly when prospects are evaluating whether your institution is worth applying to.

Why it happens. Knowledge base preparation is underestimated at procurement stage. Teams assume the chatbot will "learn" from conversations over time. It will — but during the learning period, real prospects are receiving inadequate answers.

The data. 72% of prospect questions are standard FAQ-type queries that a chatbot should handle without escalation (Source: automated classification of 12,000 Skolbot conversations, 2025). Tuition fees, entry requirements, UCAS application deadlines, funding and bursaries, accommodation, open day dates — these questions are predictable. If the chatbot cannot answer them at launch, you have deployed before you were ready.

The fix. Build the knowledge base before you go live, not after. Gather your ten most-asked questions from the admissions inbox, and source the answers from your programme pages, UCAS profiles, fee schedules, and open day landing pages. Validate each answer with the admissions team. Run the chatbot against those ten questions in staging. If the resolution rate is below 80%, delay go-live.
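The staging check described above can be expressed as a simple gate. The following is a minimal sketch, not a feature of any particular chatbot platform: the `StagingResult` type and function names are hypothetical, and it assumes the admissions team has marked each staged answer as acceptable or not. Only the 80% threshold comes from the article.

```python
from dataclasses import dataclass

# Threshold taken from the article; everything else here is illustrative.
GO_LIVE_RESOLUTION_THRESHOLD = 0.80


@dataclass
class StagingResult:
    question: str
    resolved: bool  # True if the admissions team accepted the chatbot's answer


def resolution_rate(results: list[StagingResult]) -> float:
    """Share of staging questions the chatbot answered acceptably."""
    if not results:
        return 0.0
    return sum(r.resolved for r in results) / len(results)


def ready_for_go_live(results: list[StagingResult]) -> bool:
    """Go live only when the resolution rate reaches the threshold."""
    return resolution_rate(results) >= GO_LIVE_RESOLUTION_THRESHOLD


# Hypothetical staging run: 7 of 10 questions resolved.
sample = [StagingResult(f"question {i}", resolved=i < 7) for i in range(10)]
print(f"{resolution_rate(sample):.0%}", ready_for_go_live(sample))  # 70% False
```

In this hypothetical run the chatbot resolves seven of ten questions, so the gate returns False and go-live would be delayed.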

For a structured approach to technical integration, see our guide on how to integrate an AI chatbot into your school website.

Mistake 2: Skipping escalation paths to human advisors

A chatbot without a structured handoff to a human advisor is not a recruitment tool — it is a deflection tool. Prospects who cannot get an answer and cannot reach a person do not wait. They leave.

Why it happens. Escalation is treated as optional configuration rather than a core requirement. The deployment brief focuses on the chatbot's capability, not on what happens when it cannot help.

The consequences. Admissions edge cases — non-standard qualifications, RPL routes, mature student applications, disability disclosure — all require a human who can exercise judgement. A chatbot that attempts to automate these responses creates inaccurate guidance and potential compliance risk under QAA standards for academic information. Emotional distress signals — prospects expressing anxiety about funding, grades, or personal circumstances — must trigger escalation immediately. There is no acceptable automated response to a student in difficulty.

The fix. Configure a minimum of five escalation triggers before go-live: financial hardship signals, non-standard qualification routes, emotional distress language, repeated failed resolution (more than three exchanges without a satisfactory answer), and any direct request for human contact. Every trigger should pass a full conversation transcript to the receiving advisor — not an email notification with a reference number.
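As a rough illustration of the five triggers and the transcript-carrying handoff, here is a sketch under stated assumptions. The keyword lists are placeholders invented for this example, and simple keyword matching is a stand-in for whatever detection your platform actually provides; distress detection in particular needs far more care than a word list. The `Conversation` type and the payload fields are likewise hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class Trigger(Enum):
    FINANCIAL_HARDSHIP = "financial_hardship"
    NON_STANDARD_QUALIFICATION = "non_standard_qualification"
    EMOTIONAL_DISTRESS = "emotional_distress"
    REPEATED_FAILURE = "repeated_failed_resolution"
    HUMAN_REQUESTED = "human_requested"


# Hypothetical keyword lists; a real deployment would tune or replace these.
KEYWORDS = {
    Trigger.FINANCIAL_HARDSHIP: ("can't afford", "hardship"),
    Trigger.NON_STANDARD_QUALIFICATION: ("prior learning", "mature student"),
    Trigger.EMOTIONAL_DISTRESS: ("anxious", "worried", "stressed"),
    Trigger.HUMAN_REQUESTED: ("speak to a person", "talk to someone"),
}
MAX_UNRESOLVED_EXCHANGES = 3  # "more than three exchanges" in the article


@dataclass
class Conversation:
    transcript: list[str] = field(default_factory=list)
    unresolved_exchanges: int = 0


def detect_triggers(message: str, convo: Conversation) -> set[Trigger]:
    """Return every escalation trigger that the latest message sets off."""
    text = message.lower()
    hits = {t for t, words in KEYWORDS.items() if any(w in text for w in words)}
    if convo.unresolved_exchanges > MAX_UNRESOLVED_EXCHANGES:
        hits.add(Trigger.REPEATED_FAILURE)
    return hits


def build_handoff(convo: Conversation, triggers: set[Trigger]) -> dict:
    """Payload for the advisor: the full transcript, not just a reference."""
    return {
        "reasons": sorted(t.value for t in triggers),
        "transcript": list(convo.transcript),
    }


convo = Conversation(transcript=["I'm worried I can't afford the fees."])
triggers = detect_triggers(convo.transcript[-1], convo)
handoff = build_handoff(convo, triggers)
```

The point of the design is that `build_handoff` always carries the transcript and the reasons together, so the advisor never receives a trigger without its context.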

For a detailed breakdown of escalation design, see our article on AI chatbot vs human agent.

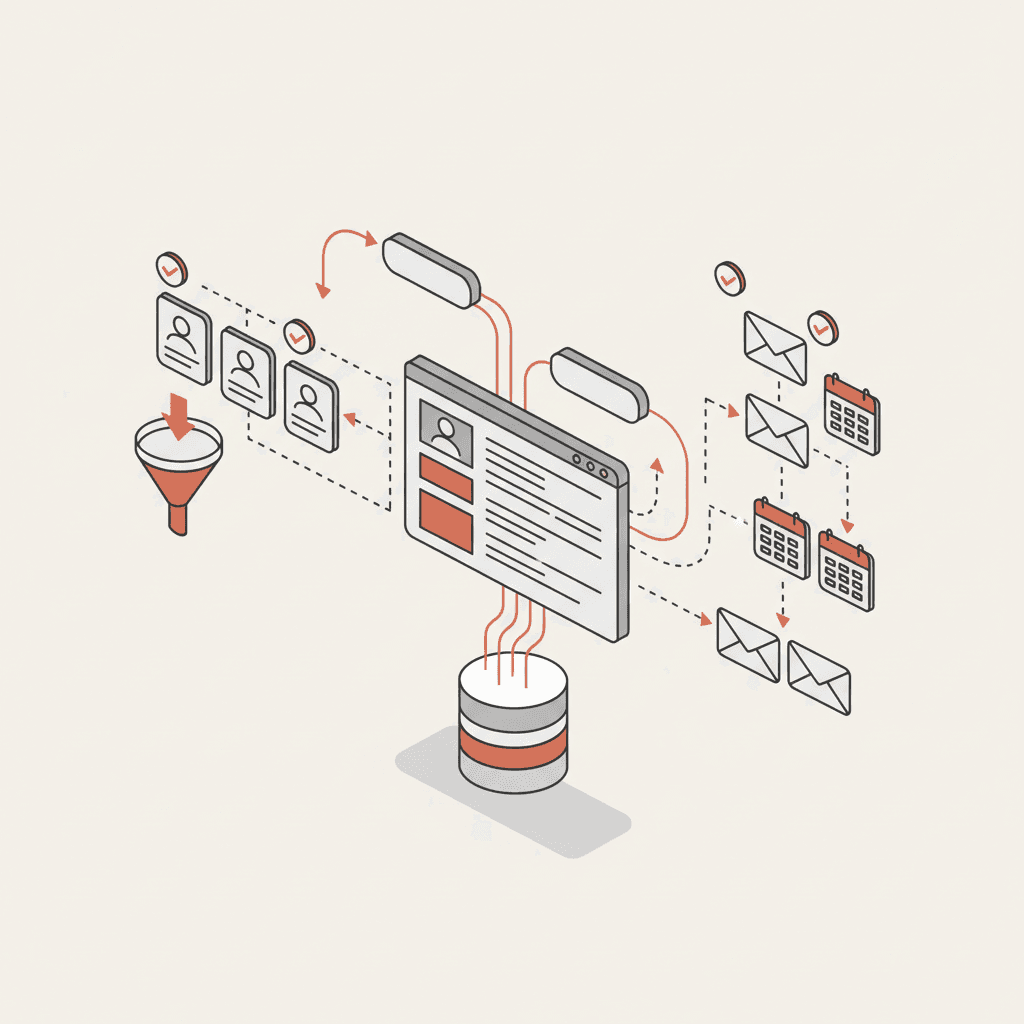

Mistake 3: Ignoring analytics dashboards post-launch

The chatbot is live, the project is closed, and the analytics dashboard sits unreviewed for months. This is the most common mistake among institutions that have made a serious procurement investment. The chatbot was supposed to reduce admissions workload — instead it answers questions poorly and nobody knows.

Why it happens. Post-launch ownership is not established. The project was run by digital or IT; ongoing management sits with neither team. Nobody is accountable for chatbot performance after the deployment sprint.

What the dashboard reveals. A working analytics dashboard shows conversation volume, top questions by frequency, resolution rate, escalation rate, and conversion events (open day registrations, form completions, application starts). A spike in questions about a specific topic — postgraduate funding, for instance — usually means a page on your website has changed, broken, or disappeared. The chatbot flags the problem before your admissions team does.

The fix. Assign a named owner for chatbot performance before go-live. Schedule a monthly 30-minute analytics review with the admissions director. Set an alert for any drop in resolution rate below 80% or any spike in escalation rate above 15%. These are your leading indicators that the knowledge base needs updating.
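The two alert thresholds can be checked with a few lines of code. This sketch assumes your dashboard can export three counts per review period (total conversations, resolved conversations, escalated conversations); the function name and message wording are illustrative only.

```python
RESOLUTION_FLOOR = 0.80    # alert when resolution rate drops below this
ESCALATION_CEILING = 0.15  # alert when escalation rate rises above this


def performance_alerts(conversations: int, resolved: int, escalated: int) -> list[str]:
    """Return a human-readable alert for each threshold that is breached."""
    if conversations == 0:
        return ["no conversations recorded in this period"]
    alerts = []
    resolution = resolved / conversations
    escalation = escalated / conversations
    if resolution < RESOLUTION_FLOOR:
        alerts.append(f"resolution rate {resolution:.0%} is below {RESOLUTION_FLOOR:.0%}")
    if escalation > ESCALATION_CEILING:
        alerts.append(f"escalation rate {escalation:.0%} is above {ESCALATION_CEILING:.0%}")
    return alerts


# Hypothetical month: 400 conversations, 300 resolved, 72 escalated.
for alert in performance_alerts(400, 300, 72):
    print(alert)
```

With these hypothetical counts both thresholds are breached (75% resolution, 18% escalation), which is the signal to review the knowledge base.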

The JISC digital experience insights programme provides benchmarking data for digital student services in UK higher education — use it to contextualise your chatbot performance metrics against sector norms.

Mistake 4: Testing only internally before going live

The chatbot is tested by the project team, the admissions director, and a member of the IT department. All three know the institution well. Their questions are confident, their vocabulary is institutional, and their mental model of the website is accurate. Then real prospective students arrive.

Why it matters. Real prospects ask questions in their own language, not yours. They do not know the difference between a foundation year and an integrated master's. They confuse UCAS Track with the university application portal. They ask about "the business degree" without specifying the level or the pathway. Internal testers miss all of these because they unconsciously use correct terminology.

The gap this creates. A chatbot that performs well in internal testing and poorly in live use is a trust liability. The first week of live traffic exposes every knowledge gap that internal testing did not find — during the precise period when first impressions are forming.

The fix. Before go-live, run an external test with at least 15 people who have no familiarity with your institution — sixth-form students, contacts from partner schools, or members of the public. Ask them to use the chatbot to answer five specific questions drawn from your actual admissions enquiries. Log every unanswered or poorly answered question and fix it before you publish. The EDUCAUSE research base on digital student services consistently identifies external usability testing as the highest-return pre-launch activity for student-facing digital tools.
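Logging the external test results in a structured way makes the follow-up fixes easier to prioritise. The sketch below assumes each test row is recorded as a tester identifier, the question asked, and whether the answer was judged acceptable; that row format and the function names are assumptions made for illustration.

```python
from collections import Counter

MIN_TESTERS = 15  # "at least 15 people" in the article

# Each row: (tester_id, question, answered_well)
TestRow = tuple[str, str, bool]


def failed_questions(results: list[TestRow]) -> list[tuple[str, int]]:
    """Questions that failed at least once, most frequently failed first."""
    failures = Counter(question for _, question, ok in results if not ok)
    return failures.most_common()


def enough_testers(results: list[TestRow]) -> bool:
    """True when the log covers the minimum number of distinct testers."""
    return len({tester for tester, _, _ in results}) >= MIN_TESTERS


# Hypothetical log from three testers.
log = [
    ("t1", "How much is the business degree?", False),
    ("t2", "How much is the business degree?", False),
    ("t2", "When is the next open day?", True),
    ("t3", "Can I apply through Clearing?", False),
]
print(failed_questions(log))
print(enough_testers(log))
```

Ranking failures by frequency gives the admissions team an ordered fix list rather than a flat dump of transcripts.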

Mistake 5: Deploying without CRM integration

The chatbot is live and generating conversations. Prospective students are identifying themselves, stating their programme interest, and registering for open days. None of this information reaches your CRM. Your admissions team has no visibility of the pipeline being generated.

Why it matters. Without CRM integration, the chatbot operates as a black box. The conversations happen, the interest is expressed, and then the data disappears. The admissions team continues to manage the pipeline manually, unaware that the chatbot has already had a substantive exchange with dozens of prospective students now sitting in their UCAS queue.

The funnel impact. Institutions with well-configured AI chatbots reduce first-contact abandonment from 91% to 76%, generating 167% more first contacts (Source: funnel analysis of 30 institutions, 2025–2026 cohort). That uplift only translates into enrolments if the CRM receives the data and the admissions team can act on it. An integrated chatbot creates or enriches a prospect record in real time — name, email, programme interest, conversation history, escalation reason, and conversion events.
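The two figures in that claim are consistent with each other, which is worth checking before quoting them internally. If 91% of prospects abandon before first contact, 9% make contact; at 76% abandonment, 24% do. The short calculation below works through that arithmetic; the function name is illustrative.

```python
def first_contact_uplift(abandon_before: float, abandon_after: float) -> float:
    """Relative increase in first contacts when abandonment falls."""
    contact_before = 1 - abandon_before  # 0.09 for 91% abandonment
    contact_after = 1 - abandon_after    # 0.24 for 76% abandonment
    return contact_after / contact_before - 1


uplift = first_contact_uplift(0.91, 0.76)
print(f"{uplift:.0%}")  # 167%
```

Moving from 9 to 24 contacts per 100 prospects is a factor of roughly 2.67, which is the 167% increase cited.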

The fix. CRM integration should be a go-live requirement, not a post-launch enhancement. The main higher education CRM platforms — HubSpot, Salesforce, Dynamics 365, SITS, Ellucian — all support webhook or API connections. If your institution does not yet use a CRM, the chatbot dashboard functions as a lightweight alternative. But the data should flow somewhere structured from day one. For the full technical walkthrough, see our guide on how to integrate an AI chatbot into your school website.
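To make the data requirement concrete, here is a sketch of the prospect record a chatbot might send to a CRM. The field names mirror the list in the article (name, email, programme interest, conversation history, escalation reason, conversion events), but the `ProspectRecord` type and the JSON shape are assumptions: each CRM defines its own schema and endpoint, so this shows only the serialisation step and makes no network call.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class ProspectRecord:
    name: str
    email: str
    programme_interest: str
    conversation_history: list[str] = field(default_factory=list)
    escalation_reason: Optional[str] = None
    conversion_events: list[str] = field(default_factory=list)


def to_webhook_body(record: ProspectRecord) -> str:
    """Serialise the record as JSON for a CRM webhook or API call."""
    return json.dumps(asdict(record), ensure_ascii=False)


# Hypothetical prospect captured during a chatbot conversation.
record = ProspectRecord(
    name="Sam Example",
    email="sam@example.org",
    programme_interest="BSc Business Management",
    conversation_history=["When is the next open day?"],
    conversion_events=["open_day_registration"],
)
body = to_webhook_body(record)
print(body)
```

Mapping these fields onto your CRM's own objects is the integration work; the value of agreeing the record shape first is that nothing the prospect said is lost on the way.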

Mistake 6: Failing to maintain the knowledge base regularly

The chatbot is correctly configured at launch. Six months later, the tuition fees have changed, a programme has been renamed, the admissions deadline has shifted, and a scholarship scheme has closed. The chatbot is still giving the old answers.

Why it happens. Knowledge base maintenance is not included in the original project scope. It is treated as a one-time configuration task rather than an ongoing operational responsibility.

The trust damage. A prospective student who receives incorrect fee information from your chatbot and then discovers the real figure on your website does not conclude that the chatbot was out of date. They conclude that your institution cannot be trusted to give accurate information. The reputational cost of a stale knowledge base extends well beyond the chatbot itself.

The fix. Build a quarterly knowledge base review into the admissions calendar. Cross-reference the chatbot's current answers against your live programme pages, fee schedules, and UCAS profiles. Pay particular attention to UCAS deadline dates, clearing parameters, and scholarship availability — these change every cycle. A 90-minute review four times a year prevents the most damaging stale-data failures. Assign the same person who manages your UCAS profile to own the chatbot knowledge base — their awareness of what changes and when makes them the natural fit.
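A quarterly review is easier to enforce if each knowledge base entry carries a last-reviewed date. The following sketch assumes you can export entries with that date; the `KnowledgeEntry` type is hypothetical, and the 92-day interval is an approximation of one quarter chosen for this example.

```python
from dataclasses import dataclass
from datetime import date, timedelta

REVIEW_INTERVAL = timedelta(days=92)  # roughly one quarter; an assumption


@dataclass
class KnowledgeEntry:
    topic: str
    last_reviewed: date


def overdue_entries(entries: list[KnowledgeEntry], today: date) -> list[str]:
    """Topics whose last review is older than the review interval."""
    return [e.topic for e in entries if today - e.last_reviewed > REVIEW_INTERVAL]


# Hypothetical entries checked on 1 September 2025.
entries = [
    KnowledgeEntry("tuition fees", date(2025, 3, 1)),
    KnowledgeEntry("open day dates", date(2025, 8, 1)),
]
print(overdue_entries(entries, today=date(2025, 9, 1)))  # ['tuition fees']
```

The overdue list gives the knowledge base owner a starting agenda for each review session.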

Mistake 7: Underestimating UK GDPR and ICO compliance requirements

The chatbot is deployed with a generic privacy notice, a vague consent checkbox, and conversation data stored on a server with no clear documentation of where it is or who has access to it. For most institutions, this is an ICO investigation waiting to happen.

What UK GDPR actually requires. Any chatbot that collects personal data from prospective students, including minors enquiring about sixth-form college or foundation year routes, must comply with the UK General Data Protection Regulation. This is not sector guidance; it is the law. Key requirements include: a clear and specific lawful basis for processing conversation data (with consent obtained before collection where consent is the basis relied on), a published retention period, data minimisation (collect only what is necessary for the admissions purpose), and an operational process for honouring erasure requests within one calendar month.
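A published retention period only means something if it is enforced. As a minimal sketch, the function below identifies stored conversations that have outlived the retention period so they can be deleted. The 365-day value is purely hypothetical (use whatever your privacy notice states), and the mapping of conversation ID to start time is an assumed export format, not a vendor API.

```python
from datetime import datetime, timedelta, timezone

RETENTION = timedelta(days=365)  # hypothetical; use the period in your privacy notice


def expired_conversation_ids(
    conversations: dict[str, datetime], now: datetime
) -> list[str]:
    """IDs of conversations stored for longer than the retention period."""
    return sorted(
        cid for cid, started in conversations.items() if now - started > RETENTION
    )


# Hypothetical store: conversation ID mapped to its start time (UTC).
stored = {
    "conv-001": datetime(2024, 1, 15, tzinfo=timezone.utc),
    "conv-002": datetime(2025, 6, 1, tzinfo=timezone.utc),
}
now = datetime(2025, 9, 1, tzinfo=timezone.utc)
print(expired_conversation_ids(stored, now))  # ['conv-001']
```

Your Data Protection Officer and vendor should confirm how deletion is actually carried out and evidenced; this only shows the selection logic.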

The AI-specific layer. The ICO has published specific guidance on AI and automated decision-making that applies directly to student chatbots. Where the chatbot influences an admissions-related decision — routing a prospect, scoring their enquiry type, determining escalation — the institution may need to document the logic and provide an explanation to the data subject on request. The ICO's AI guidance is the authoritative reference; institutions should review it before deployment, not after a complaint.

The fix. Involve your Data Protection Officer before procurement is finalised, not at go-live. Require the chatbot vendor to provide a signed Data Processing Agreement, documentation of where conversation data is stored, and a confirmed data deletion process. Publish a specific privacy notice for chatbot interactions — a generic website privacy policy is insufficient for a service that actively collects personal data in real time. Prospective students should be informed at the start of each conversation that they are interacting with an AI assistant, and that their data will be processed in accordance with UK GDPR. This transparency requirement aligns with both ICO guidance and best practice standards published by JISC for higher education digital services.

For a comprehensive compliance checklist specific to UK higher education, see our chatbot RFP checklist for higher education.

Mistake 8: Not training admissions staff on the hybrid model

The chatbot is live and the admissions team has been told that the technology "handles the routine questions". Nobody has explained what that means in practice, which questions the chatbot cannot handle, what an escalation looks like when it arrives, or how to find a prospect's conversation history. The first escalation comes through as a bare email with no context, and the advisor has to start from scratch.

Why this matters. The chatbot's value in the hybrid model depends entirely on what happens at the handoff. A warm handoff — full transcript, programme interest, escalation reason, conversation history — saves the advisor time and gives the prospect a seamless experience. A cold handoff — a notification that "a prospect asked about your university" — is worse than no automation at all, because it creates the impression of engagement without the substance.

The TEF dimension. The Teaching Excellence Framework places weight on the quality of the student experience, including pre-enrolment contact. An institution whose admissions team cannot demonstrate responsive, informed engagement with prospective students — regardless of whether that first contact was automated or human — is not building the evidence base that QAA and OfS assessors expect to see.

The fix. Run a structured training session with the admissions team before go-live — not a generic product demo, but a session that covers: which questions the chatbot handles autonomously; which trigger types cause escalation; how to access and read the conversation transcript; how to update a prospect record after a human follow-up; and how to flag knowledge base gaps when the chatbot gives a wrong answer. Schedule a four-week post-launch review to identify where the handoff process is breaking down in practice. The admissions team's feedback from that review is the most valuable input your chatbot configuration will ever receive.

Response time: the metric that ties all eight mistakes together

Every one of these mistakes reduces the chatbot's ability to respond accurately and promptly. The baseline data makes the stakes clear: AI chatbot response time averages 3 seconds, 24/7, compared to a median of 47 hours for email (Source: Skolbot mystery shopping audit, 2025, 80 institutions). During UCAS Clearing in August, email backlogs are significantly worse.

The chatbot's competitive advantage — always-on, instant, accurate — disappears the moment the knowledge base is stale, the escalation path is broken, or the CRM is not receiving the data. The eight mistakes in this article are the eight ways that advantage is surrendered.

FAQ

How long does it take to build a chatbot knowledge base for a UK university?

For most institutions, the initial knowledge base takes two to three working days to prepare and validate. The majority of the content — fee schedules, entry requirements, programme descriptions, open day dates — already exists on your website and in your UCAS profile. The work is aggregation and validation, not creation. Allow an additional half-day for the admissions team to review the top 20 questions and confirm accuracy before go-live.

Who owns the chatbot knowledge base after launch?

Ownership should sit with the admissions or marketing team, not IT. The people who manage your UCAS profile and programme pages are the ones who know when fees change, when deadlines shift, and when a programme is renamed. They are the natural owners of the knowledge base. IT's role post-launch is maintaining integration and uptime — not content accuracy.

Do we need to notify the ICO that we are using a chatbot?

You do not need to notify the ICO specifically about deploying a chatbot. However, if your Record of Processing Activities (ROPA) does not currently include a chatbot data processing activity, it needs to be updated. If the chatbot makes or influences automated decisions about prospective students, you may also need to complete a Data Protection Impact Assessment (DPIA). Your Data Protection Officer should advise on both. The ICO's AI guidance provides the relevant framework.

Can a chatbot help with UCAS Clearing without specialist configuration?

Standard chatbot configurations are not optimised for Clearing out of the box. Clearing requires time-sensitive, institution-specific answers about amended grade thresholds and available places — information that changes hourly on results day. To use the chatbot effectively during Clearing, you need a rapid knowledge base update protocol in place before August, and escalation triggers configured for the higher emotional stakes of A-level results day. Institutions that do this well see materially better conversion of insurance-choice applicants.

How do we measure whether our chatbot deployment is actually working?

Five metrics cover the essentials: resolution rate (target above 85%), escalation rate (flag if above 15%), open day registration conversion rate (benchmark: 18% via chatbot), CRM data completion rate (is every conversation creating a usable prospect record?), and 7-day prospect return rate (benchmark: 34% of prospects who interacted with the chatbot return within a week). The EDUCAUSE research base provides sector benchmarks for digital student services performance that offer useful context for these figures.
